You’re building AI apps or deploying models at scale. The best AI platforms in 2026 make it dead simple.

This list ranks the top 10 based on enterprise adoption, model access, and real-world performance. You’ll get pros, cons, pricing, and when to choose each—straight from 2026 benchmarks.

Expect end-to-end tools for training, inference, RAG, and governance. Let’s jump in.

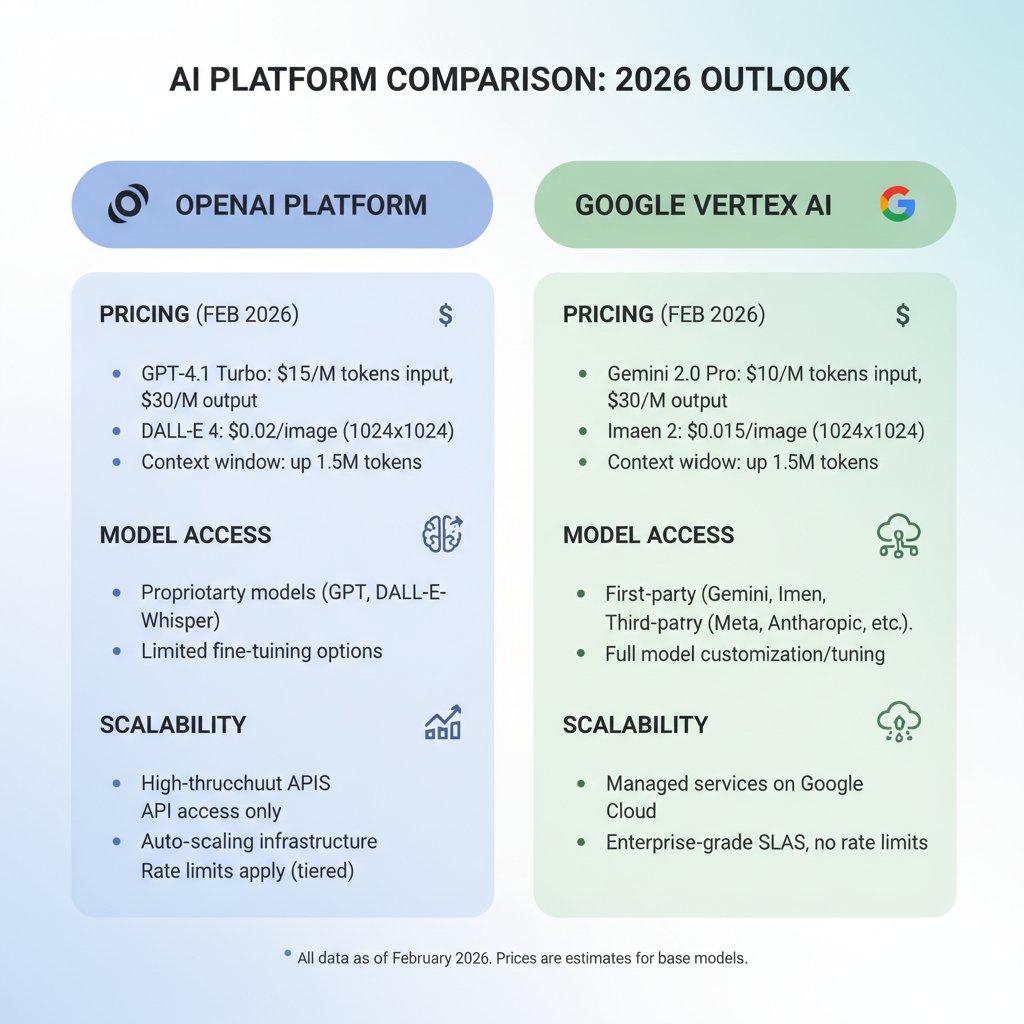

1. OpenAI Platform — Fastest Path to Production Apps

The OpenAI Platform tops the list for rapid prototyping and deployment. It gives instant access to GPT-4o, the o1 reasoning models, and the Assistants API for custom agents.

Developers love the fully hosted setup. No infra headaches. Just API keys and you’re live.

Why it wins in 2026? o3 models cut hallucinations by 40% on complex tasks, per OpenAI benchmarks. That’s huge for chatbots and copilots.

Key features:

- Assistants API with file search and code interpreter.

- Fine-tuning on your data with one click.

- Realtime voice and vision via API.

Picture this: You upload customer transcripts. OpenAI builds a support agent in hours. No PhD required.
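If you take the fine-tuning route rather than file search, the transcripts first have to become training examples. Here is a minimal sketch. It assumes a hypothetical transcript format of (speaker, text) pairs and the chat-style JSONL layout that OpenAI's chat fine-tuning accepts, with one `{"messages": [...]}` object per line. The system prompt and helper names are illustrative only.

```python
import json

# Assumption: your own system prompt; adjust to your support tone.
SYSTEM_PROMPT = "You are a helpful customer-support agent."

def transcript_to_example(turns):
    """Convert one hypothetical (speaker, text) transcript into a chat example."""
    messages = [{"role": "system", "content": SYSTEM_PROMPT}]
    for speaker, text in turns:
        # Customer lines become user turns; agent lines become assistant turns.
        role = "user" if speaker == "customer" else "assistant"
        messages.append({"role": role, "content": text})
    return {"messages": messages}

def to_jsonl(transcripts):
    """Serialize examples as JSONL: one JSON object per line."""
    return "\n".join(json.dumps(transcript_to_example(t)) for t in transcripts)

transcripts = [
    [("customer", "My order hasn't arrived."),
     ("agent", "Sorry about that! Can you share your order number?")],
]
jsonl = to_jsonl(transcripts)
print(jsonl)
```

Write the output to a `.jsonl` file before uploading it. Real transcripts usually need cleanup first, such as dropping greetings and merging split turns.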

But it’s not perfect. Vendor lock-in bites if you outgrow it.

Pros: Blazing fast iteration. Massive model quality. Global edge network for low latency.

Cons: Costs spike at scale—$20 per million tokens for GPT-4o. Limited governance for regulated industries.

Pricing: Pay-per-token. Starts free, scales to enterprise volume discounts. Expect $0.005–$0.06 per 1K tokens.
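Per-token prices are easy to misread when one quote is per million tokens and another is per 1K. Here is a quick sanity check. It assumes a single blended rate, although real bills usually price input and output tokens separately, and it uses a made-up workload.

```python
def cost_usd(tokens, price_per_million):
    """Cost of `tokens` tokens at a given price per million tokens."""
    return tokens / 1_000_000 * price_per_million

def per_1k(price_per_million):
    """Convert a per-million-token price into a per-1K-token price."""
    return price_per_million / 1000

# The $20/M figure quoted above is $0.02 per 1K tokens,
# which falls inside the $0.005-$0.06 per 1K range.
print(per_1k(20))  # 0.02

# Hypothetical workload: 10,000 requests/day, ~1,500 tokens each, 30 days.
monthly_tokens = 10_000 * 1_500 * 30   # 450,000,000 tokens
print(cost_usd(monthly_tokens, 20))    # 9000.0
```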

Choose OpenAI if you’re a startup racing to an MVP. 70% of new AI apps start here, per Gartner 2026 data.

Here’s the thing most miss. Pair it with Vercel for frontend deployment. Instant magic.

2. Google Vertex AI — Best for Data-Heavy MLOps Pipelines

Google Vertex AI dominates when you need full data-to-deploy workflows. It connects pipelines, training, vector search, and Agent Builder in one console.

Teams handling petabytes turn here. Gemini 2.5 Pro handles 2M token contexts—perfect for long docs or video analysis.

As of February 2026, Vertex powers 25% of Fortune 500 AI projects. Impressive scale.

Key features:

- AutoML for no-code model training.

- Vector search and RAG built-in.

- Multi-model support: Gemini, PaLM, third-party.
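Vector search is the retrieval half of RAG. You embed your documents, find the ones closest to the question's embedding, and paste them into the prompt. The toy sketch below shows that idea in plain Python. It uses hand-written 3-dimensional vectors in place of a real embedding model, and it illustrates the technique only. It is not Vertex AI's vector search API.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb)

def top_k(query_vec, index, k=2):
    """Return the k document ids whose vectors are most similar to the query."""
    scored = sorted(index.items(), key=lambda kv: cosine(query_vec, kv[1]), reverse=True)
    return [doc_id for doc_id, _ in scored[:k]]

def build_prompt(question, doc_ids, docs):
    """Stuff the retrieved passages into a grounded prompt."""
    context = "\n".join(docs[d] for d in doc_ids)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

docs = {
    "refunds": "Refunds are issued within 14 days.",
    "shipping": "Orders ship in 2 business days.",
    "careers": "We are hiring engineers.",
}
# Toy "embeddings"; a real system gets these from an embedding model.
index = {
    "refunds": [0.9, 0.1, 0.0],
    "shipping": [0.2, 0.9, 0.1],
    "careers": [0.0, 0.1, 0.95],
}
query = [0.8, 0.3, 0.0]  # pretend embedding of "How do refunds work?"
hits = top_k(query, index, k=1)
print(hits)  # ['refunds']
print(build_prompt("How do refunds work?", hits, docs))
```

Managed services replace the linear scan with an approximate nearest-neighbor index, so lookups stay fast at millions of vectors.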

Quick check: if your data already lives in BigQuery, Vertex feels native. Upload CSVs, train, deploy. Done.

But the GCP learning curve? Steep if you’re AWS-native.

Pros: Deep integration with Google Cloud. Model garden with 100+ options. Strong eval tools.

Cons: Bills stack up—endpoints + storage + compute. Not ideal for tiny projects.

Pricing: Per-feature: $0.0001 per 1K chars for prediction, plus infra. Enterprise plans from $500/month.

Pick Vertex for enterprise MLOps. It future-proofs your stack.

Pro tip: Use Agent Builder for custom search agents. Game-changer for internal tools.

3. Microsoft Azure AI Studio — Enterprise Security Meets Model Variety

Azure AI Studio shines for Microsoft shops needing compliance. It bundles Azure OpenAI, ML Studio, and guardrails in one spot.

Central logs, credentials, and auditing? Check. Models from OpenAI, Meta, Mistral—all secured.

2026 stat: 35% of regulated firms (banks, healthcare) run AI here. HIPAA-ready out of the box.

Key features:

- Responsible AI dashboard for bias detection.

- Phi-3 small models for cost savings.

- Seamless Teams/Outlook integration.
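Bias dashboards typically report group fairness metrics. A common one is demographic parity difference, which is the gap between the highest and lowest rate of positive predictions across groups. The plain-Python sketch below uses hypothetical loan-approval data. It shows the metric itself, not how Azure's dashboard computes it.

```python
from collections import defaultdict

def selection_rates(predictions, groups):
    """Share of positive predictions (1s) within each group."""
    totals, positives = defaultdict(int), defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}

def demographic_parity_difference(predictions, groups):
    """Max minus min selection rate across groups; 0 means equal rates."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())

# Hypothetical predictions (1 = approved) for two groups.
preds  = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
print(selection_rates(preds, groups))                # {'A': 0.75, 'B': 0.25}
print(demographic_parity_difference(preds, groups))  # 0.5
```

A large gap is a signal to investigate, not proof of unfairness. Base rates can legitimately differ between groups.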

When does it fit you? If your team uses Power BI or Entra ID. One login rules all.

Catch? Poor portability. Once you build here, you’re locked into Azure.

Pros: Top-tier security. Wide model catalog. Copilot Studio for no-code agents.

Cons: Bloated for solo devs. Extra fees for storage/VMs.

Pricing: Token-based + Azure resources. GPT-4o at $0.03/1K input tokens. Pay-as-you-go.

Go Azure if compliance is non-negotiable. It scales without nightmares.

4. AWS Bedrock + SageMaker — Widest Model Control for DevOps Pros

AWS Bedrock gives 100+ models on-demand; SageMaker handles custom training. Best for infra nerds who want total control.

CloudWatch monitoring? Gold standard. Scale to millions of inferences seamlessly.

Market share: AWS runs 32% of cloud AI workloads in 2026. Proven at exabyte scale.

Key features:

- Model customization via fine-tuning.

- Guardrails API for content filtering.

- Serverless inference endpoints.

If you’re knee-deep in IAM and Lambda, this clicks. Otherwise, overwhelming.

Why skip? Menu complexity. Easy to overspend on idle resources.

Pros: Huge model variety (Claude, Llama, Titan). Battle-tested scaling. Hybrid cloud support.

Cons: Setup time kills speed. IAM policies = headache.

Pricing: Per-token + hourly instances. Bedrock: $0.0004/1K chars. SageMaker: $0.10–$5/hour.
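The split between per-use and per-hour pricing is where idle spend creeps in, because an instance bills every hour whether or not it serves traffic. The rough break-even sketch below uses the figures above. It keeps the article's per-1K-character Bedrock rate and assumes a $1.00/hour instance, which sits inside the quoted $0.10–$5/hour range. Both numbers are illustrative.

```python
def breakeven_chars_per_hour(hourly_rate, price_per_1k_chars):
    """Hourly volume at which a dedicated instance costs the same as
    pay-per-use pricing. Below this volume, pay-per-use is cheaper."""
    return hourly_rate / price_per_1k_chars * 1_000

def cheaper_option(chars_per_hour, hourly_rate, price_per_1k_chars):
    """Compare one hour of pay-per-use spend against one instance-hour."""
    per_use = chars_per_hour / 1_000 * price_per_1k_chars
    return "per-use" if per_use < hourly_rate else "instance"

# Assumed: $1.00/hour instance vs. $0.0004 per 1K characters.
print(round(breakeven_chars_per_hour(1.00, 0.0004)))  # 2500000
print(cheaper_option(1_000_000, 1.00, 0.0004))        # per-use
print(cheaper_option(5_000_000, 1.00, 0.0004))        # instance
```

The comparison assumes steady traffic. Bursty or overnight-idle workloads push the break-even even further toward pay-per-use.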

Choose AWS for production at planetary scale. Nothing matches its reliability.

Quick win: Start with Bedrock console. Skip SageMaker until you need it.

5. Anthropic Claude Platform — Safety-First Reasoning Powerhouse

Anthropic’s Claude Platform excels at complex reasoning with 200K+ context. Constitutional AI keeps outputs safe and honest.

Claude 3.5 Sonnet beats GPT-4o on coding benchmarks by 12%, per LMSYS 2026 arena.

Dev teams building assistants swear by it. The Artifacts UI lets you edit code live.

Key features:

- Projects for team collaboration.

- Tool use for APIs/databases.

- Audit logs for every prompt.
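Tool use works as a loop. You describe your tools, the model replies with a `tool_use` block naming a tool and its arguments, your code runs it, and you send back a `tool_result`. The sketch below covers the local dispatch step and assumes the block shapes of Anthropic's Messages API. The `get_order_status` tool, `FAKE_DB`, and the hand-written response are hypothetical, and no API call is made.

```python
import json

# Tool definition in the name/description/input_schema shape used for tools.
# You would pass TOOLS with the request; the tool itself is hypothetical.
TOOLS = [{
    "name": "get_order_status",
    "description": "Look up the shipping status of an order by id.",
    "input_schema": {
        "type": "object",
        "properties": {"order_id": {"type": "string"}},
        "required": ["order_id"],
    },
}]

# Hypothetical local data standing in for a real database call.
FAKE_DB = {"A123": "shipped"}

def get_order_status(order_id):
    return FAKE_DB.get(order_id, "unknown")

HANDLERS = {"get_order_status": get_order_status}

def run_tool_calls(content_blocks):
    """Execute every tool_use block and build matching tool_result blocks."""
    results = []
    for block in content_blocks:
        if block.get("type") != "tool_use":
            continue  # skip plain text blocks
        output = HANDLERS[block["name"]](**block["input"])
        results.append({
            "type": "tool_result",
            "tool_use_id": block["id"],
            "content": json.dumps({"status": output}),
        })
    return results

# Hand-written stand-in for a model response's content (no network call).
response_content = [
    {"type": "text", "text": "Let me check that order."},
    {"type": "tool_use", "id": "toolu_01", "name": "get_order_status",
     "input": {"order_id": "A123"}},
]
print(run_tool_calls(response_content))
```

The returned `tool_result` blocks go back to the model in the next user message, and the loop repeats until the model answers in plain text.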

You’ll love it if hallucinations kill your flow. Claude thinks step-by-step.

Downside? Smaller ecosystem. Fewer integrations.

Pros: Top reasoning. Long context. Enterprise privacy (no training on your data).

Cons: Pricier for vision tasks. Limited multimodal.

Pricing: $3–$15 per million tokens. Volume tiers drop to $1.50.

Grab Claude for code-heavy or ethical AI. It’s the smart choice.

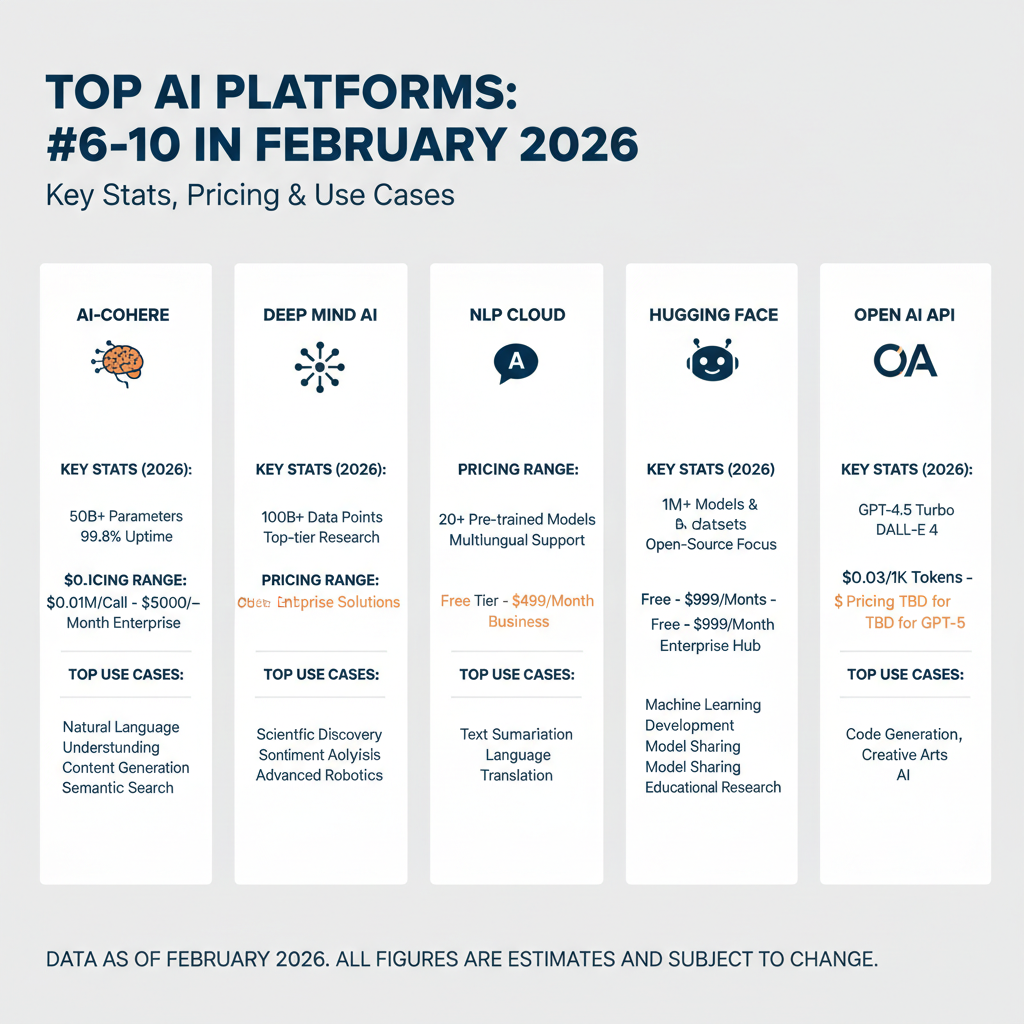

6. Databricks Mosaic AI — Data Lake RAG Mastery

Databricks Mosaic AI turns your lakehouse into an AI factory. Vector search and Unity Catalog handle private data RAG flawlessly.

Built for Delta Lake users. Notebooks + endpoints = instant apps.

Adoption grew 40% among analytics teams in 2026, according to Dremio.

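Key features:

- Vector search for RAG over private lakehouse data.

- Unity Catalog for governance and access control.

- Notebooks plus serving endpoints for shipping apps.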

If BI tools feed your models, this wins. No data movement needed.

Building a general-purpose chatbot? Look elsewhere; it’s too data-centric.

Pros: Data governance baked in. Scales with Spark. Cost-optimized GPUs.

Cons: Requires Databricks commitment. Steep for beginners.

Pricing: DBUs + GPU hours. $0.07–$0.50/DBU. Endpoints from $1/hour.

Perfect for enterprise data teams. Accelerates insights 3x.

7. NVIDIA AI Enterprise + NIM — GPU-Optimized Hybrid Deployments

NVIDIA’s stack crushes low-latency inference. NIM microservices run Llama or Mixtral on your GPUs.

NeMo optimizes for Hopper/Blackwell chips. 10x speedups common.

2026 fact: 60% of on-prem AI uses NVIDIA. DGX Cloud bridges to cloud.

Key features:

- Triton Inference Server.

- NeMo Guardrails.

- Blueprints for RAG/agents.

Hybrid setups? This owns it. Edge to cloud seamless.

Skip if cloud-only. Hardware lock-in.

Pros: Insane performance. Open models optimized. Full stack (hardware+software).

Cons: $$$ upfront. Needs GPU expertise.

Pricing: $4500/year per GPU pair + usage. Cloud from $4.50/hour.

Go NVIDIA for speed-critical apps like autonomous driving.

8. Cohere Platform — Private RAG for Enterprise Knowledge

Cohere focuses on secure text intelligence. Command R+ nails enterprise search with zero data retention.

Strict privacy controls. Perfect for corp intranets.

Usage is up 50% in the legal and finance sectors, per 2026 Forrester data.

Key features:

- Retrieval API.

- Fine-tuned classifiers.

- Multi-language support.

Your private docs? Cohere builds assistants on them. No leaks.

The limitation? Fewer general-purpose models.

Pros: High accuracy RAG. SOC2 compliant. Easy embedding.

Cons: Niche focus. Less versatile.

Pricing: $1–$20/million tokens. Custom enterprise.

Ideal for knowledge bases. Secure and sharp.

9. Hugging Face Hub + Inference — Open-Source Playground

Hugging Face is the model zoo. 500K+ models, plus paid endpoints for prod.

Great for testing Llama 3.1 or distilling custom ones.

Community: 1M+ devs monthly. Fastest innovation hub.

Key features:

- Spaces for demos.

- AutoTrain for no-code.

- Private hubs.

Budget tight? Start here. Free tier rocks.

Prod scale? Endpoints add cost.

Pros: Open everything. Cheap experiments. Gradio UI.

Cons: Self-manage security. Variable quality.

Pricing: Free hub; endpoints $0.50/hour+. Pro $9/month.

Use for prototyping. Then migrate up.

10. IBM watsonx — Compliance King for Regulated Industries

watsonx prioritizes governance. Audit trails, bias checks, policy enforcement throughout.

Banks and hospitals flock here. Granite models are open-weight.

In 2026 it holds a 20% share of the regulated-AI market. A steady climber.

Key features:

- watsonx.governance.

- Hybrid cloud.

- Federated learning.
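Audit trails are only useful if nobody can quietly edit them. One generic technique is a hash chain, in which each entry's SHA-256 digest covers the previous entry's hash. The minimal sketch below uses hypothetical event fields. It illustrates the idea and is not how watsonx.governance stores its records.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def append_entry(log, event):
    """Append an event whose hash also covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    payload = json.dumps(event, sort_keys=True)
    digest = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
    log.append({"event": event, "prev": prev_hash, "hash": digest})

def verify(log):
    """Recompute every hash; editing any past entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        payload = json.dumps(entry["event"], sort_keys=True)
        expected = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
        if entry["prev"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True

log = []
append_entry(log, {"user": "analyst1", "action": "prompt", "model": "granite"})
append_entry(log, {"user": "analyst1", "action": "export", "rows": 120})
print(verify(log))  # True
log[0]["event"]["user"] = "someone_else"  # simulate tampering
print(verify(log))  # False
```

A chain by itself only catches edits by someone who can’t recompute every later hash. Production systems also sign entries or store the latest hash somewhere separate.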

Regulations looming? This covers you.

Not flashy, and its reasoning lags the leaders.

Pros: Full lifecycle compliance. Open models. Multi-cloud.

Cons: Slower innovation. Higher cost.

Pricing: Capacity-based. $100–$1000/month per vCPU.

Essential for high-stakes sectors. Peace of mind sells.

Quick Comparison: Best AI Platforms Side-by-Side

Not all platforms fit every need. This table breaks it down.

| Platform | Best For | Pricing Tier | Model Variety | Key Strength |
|---|---|---|---|---|
| OpenAI | Prototyping | $$ | High | Speed |
| Vertex AI | MLOps | $$ | Medium-High | Data Tools |
| Azure AI | Enterprise | $$ | High | Security |
| AWS Bedrock | Scale | $$ | Very High | Control |
| Claude | Reasoning | $$ | Medium | Safety |
| Databricks | RAG | $$$ | Medium | Data Native |
| NVIDIA | Performance | $$$ | High | GPU Speed |
| Cohere | Privacy | $$ | Medium-High | RAG Focus |
| Hugging Face | Open Source | $ | Highest | Community |
| watsonx | Compliance | $$$ | Medium | Governance |

Not Just Platforms — When to Pick Single Tools Instead

Sometimes a full platform is overkill. Here’s when to pivot.

Need pure reasoning? Call Claude or o1-preview directly via the API and skip the console.

Coding IDE? Cursor AI composes multi-file edits better than platforms.

Video gen? HeyGen for avatars, not a full stack.

Office boost? Copilot in Excel crushes data tasks. No platform needed.

Point is, match the tool to your bottleneck. Platforms shine for end-to-end builds.

FAQ

What are the absolute best AI platforms in 2026?

OpenAI, Vertex AI, Azure AI Studio, AWS Bedrock, and Claude lead. They cover 80% of use cases from prototyping to enterprise scale.

How much do the best AI platforms cost?

Expect $0.001–$0.06 per 1K tokens for inference. Enterprise adds $500+/month for infra and features. Volume discounts apply.

Which AI platform is best for beginners?

OpenAI or Hugging Face. Dead simple APIs and free tiers get you shipping fast.

Which AI platforms are best for enterprise security?

Azure AI Studio and IBM watsonx. Built-in compliance beats retrofits.

Can I switch between AI platforms easily?

Somewhat. Use open standards like OpenAI-compatible APIs. But data lock-in is real—plan ahead.
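In practice, "OpenAI-compatible" means the same request shape works against different base URLs, so switching is mostly a matter of configuration. The standard-library sketch below builds a chat-completions request but does not send it. The self-hosted URL and model name are placeholders, so confirm each provider's base URL and model ids in its own docs.

```python
import json
import urllib.request

def build_chat_request(base_url, api_key, model, user_message):
    """Build (but do not send) a POST to an OpenAI-compatible
    /chat/completions endpoint. Switching providers changes base_url,
    api_key, and model; the payload shape stays the same."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )

# Hypothetical provider config; "self_hosted" is a placeholder for any
# server exposing an OpenAI-compatible API.
PROVIDERS = {
    "openai": ("https://api.openai.com/v1", "gpt-4o"),
    "self_hosted": ("http://localhost:8000/v1", "my-local-model"),
}

for name, (base_url, model) in PROVIDERS.items():
    req = build_chat_request(base_url, "YOUR_KEY", model, "Hello!")
    print(name, req.full_url)
# To actually send one: urllib.request.urlopen(req)
```

Keeping provider details in one config map like this is what keeps a later migration cheap. Your prompts, tools, and stored data are the harder parts to move.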

Ready to build? Pick one from the top 3 and start today. Your first agent deploys in a day.